Preservice teachers’ beliefs have a large influence on their choice to use technology in their teaching (Chen, 2010). In science teaching, one of the most widely used forms of technology is computer simulations. The learning effects of simulations in secondary level science education have been extensively documented (Rutten, van Joolingen, & van der Veen, 2012). Less research is available on primary-age learners and simulations (Zacharia, Loizou, & Papaevripidou, 2012). At the primary level, the best learning effects are achieved by combining computer simulations with laboratory experiments rather than using either method on its own (Jaakkola & Nurmi, 2008).

At the curricular level, the Next Generation Science Standards (NGSS; NGSS Lead States, 2013) in the US are based on the Framework for K-12 Science Education (Schweingruber, Keller, & Quinn, 2012). This framework identifies eight scientific and engineering practices that should be promoted in science classrooms. Of those eight practices, two are closely related to learning with simulations: “developing and using models” and “using mathematics and computational thinking.”

This study reports an intervention carried out with preservice primary teachers regarding the use of simulations in science teaching. The aims of the study were (a) to study the preservice teachers’ beliefs about their technological, pedagogical, and content knowledge (TPACK), measured through self-assessment before and after the intervention and (b) to find out the possible connections between preservice teachers’ beliefs regarding the different domains of knowledge and their attitudes toward simulations in science teaching.

This paper first considers the role of beliefs in technology integration in general and then discusses the TPACK framework. Finally, it argues that self-assessing TPACK is a way to study beliefs.

Background

Defining Beliefs and Attitudes

In a study of beliefs and attitudes, the concepts need to be defined. Koballa (1989) stated that beliefs link objects and attributes together. An example of a belief would be “Using computers (object) is hard (attribute).” We define attitudes similarly to Zacharia (2003), as mental concepts that depict favorable or unfavorable feelings toward a person, group, policy, instructional strategy, or particular discipline. An example of an attitude is, “I don’t like computers.” According to Koballa, a person has more beliefs than attitudes.

In this paper, the preservice teachers’ views on the usefulness of simulations in science teaching and their dispositions toward integrating simulations in their teaching are seen as different domains of their attitude toward simulations, because both of these constructs reflect their favorable or unfavorable feelings toward simulations.

Teacher Beliefs, Attitudes and Technology Integration

Research during the last decade has examined the role of teacher beliefs in technology integration in teaching. Among preservice teachers, self-assessed technological skills, teacher training program experiences, and beliefs about the usefulness of technology in teaching and learning influence the choice to use technology in teaching (Chen, 2010).

Abbitt (2011) studied the connection between preservice teachers’ self-assessed knowledge related to teaching with technology and their beliefs in their ability to use technology in their teaching. His results suggested that improving preservice teachers’ knowledge regarding teaching with technology may result in increased beliefs in their ability to teach efficiently using technology. With in-service teachers, those teachers who had successfully integrated technology in their teaching reported internal factors, such as having a passion for technology and having a problem solving mentality, as important factors in shaping their practices for using technology (Ertmer, Ottenbreit-Leftwich, Sadik, Sendurur, & Sendurur, 2012).

Concerning beliefs and attitudes relating to teaching science with simulations, Zacharia (2003) studied both preservice and in-service teachers’ beliefs about the advantages and disadvantages regarding the use of computer simulations in science education. His results showed that teachers’ attitudes toward using simulations in science teaching were related to their beliefs about the positive learning outcomes of using simulations.

Zacharia, Rotsaka, and Hovardas (2011) came to the same conclusion in their study with in-service teachers, but they also found a possible connection between beliefs about the usefulness of simulations in teaching and attitudes toward using simulations. Kriek and Stols (2010) listed the perceived usefulness and compatibility of simulations, expectations of colleagues, and teachers’ general technological proficiency as being connected with simulation usage.

Overall, teacher beliefs are seen as important factors when looking at technology integration, both with in-service (Ertmer et al., 2012) and preservice teachers (Chen, 2010). Teacher beliefs are even seen as more influential in teaching than is teacher knowledge (Pajares, 1992). In addition, professional development programs that do not take into account teacher beliefs and attitudes have been unsuccessful (Ryan, 2004; Stipek & Byler, 1997).

This study aimed to add to the literature about ways that preservice teachers’ beliefs in different domains measured through self-assessed knowledge are connected with their attitudes toward simulations. The TPACK framework was used to study the preservice teachers’ beliefs on technology in science teaching.

The TPACK Framework

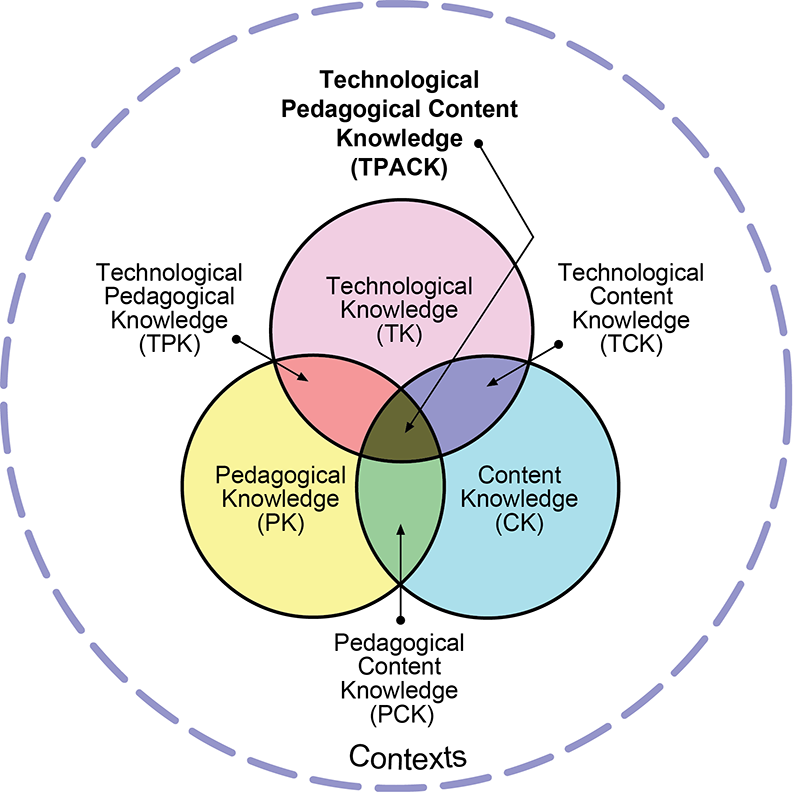

The TPACK framework was introduced by Koehler and Mishra (2005), who originally used the term “technological pedagogical content knowledge,” or “TPCK.” The TPACK framework aims to integrate technology into the same framework as pedagogy and content (Mishra & Koehler, 2006). This integration is supported by research suggesting that learning only technological skills does not prepare teachers and educators to integrate technology into their content-specific teaching (Lawless & Pellegrino, 2007). One way to illustrate the TPACK framework is by using a Venn diagram (see Figure 1).

Here, pedagogical knowledge (PK), content knowledge (CK), and technological knowledge (TK) are presented as three circles. In the intersections of the circles are pedagogical content knowledge (PCK), technological content knowledge (TCK), and technological pedagogical knowledge (TPK). In the middle, where all three circles intersect, is technological pedagogical content knowledge. In this paper, these forms of teacher knowledge are referred to as the domains of the TPACK framework.

Critique of the TPACK Framework

The TPACK framework in the form formulated by Mishra and Koehler and represented in Figure 1 has been criticized. Brantley-Dias and Ertmer (2013) criticized the framework as having too many domains of knowledge that are impossible to distinguish from each other. Archambault and Barnett (2010) also raised the issue that separating the domains from each other is difficult. They reported that in their survey study teachers placed items into different domains than the researchers had intended, which raised a question about the existence of all of these knowledge domains in practice.

Even when taking into account the criticism, however, the TPACK framework has succeeded in bringing attention to the need to consider how teachers’ knowledge of technology can support students’ learning (Brantley-Dias & Ertmer, 2013). The framework offers a chance for researchers, teachers and educators to conceptualize how technology can improve teaching and learning (Archambault & Barnett, 2010).

Preservice Teachers’ TPACK

The critique of the TPACK framework and the issue of distinguishing the different domains of knowledge from one another can be especially noteworthy with preservice teachers, who might not have the necessary understanding to categorize their knowledge into the different TPACK domains (Chai, Ling Koh, Tsai, & Lee Wee Tan, 2011). The still-forming TPACK of preservice teachers has been described as emerging TPACK (Ozgun-Koca, Meagher, & Edwards, 2010) or proto-TPK (Kontkanen et al., 2014).

Preservice teachers bring, even unknowingly, their own technological experiences from their education, free time, and university courses to their teaching (Kontkanen et al., 2014). These previous experiences affect the way the preservice teachers plan and assess the use of technology in their teaching as well as the development of their TPACK (Hofer & Grandgenett, 2012; Hofer & Harris, 2010; Ozgun-Koca et al., 2010). Maeng, Mulvey, Smetana, and Bell (2013) suggested that science teaching method courses should emphasize the role of technology in supporting pedagogical approaches in teaching the specific content.

Soon after the TPACK framework was developed, it became obvious that ways to measure TPACK empirically were needed (Koehler, Shin, & Mishra, 2012). Perhaps the most widely used survey for preservice teachers to self-assess their TPACK is the Survey of Preservice Teachers’ Knowledge of Teaching and Technology (Schmidt et al., 2009). Schmidt et al.’s survey has been shown to have acceptable validity and reliability in modifications (Chai, Koh, & Tsai, 2010; Koh, Chai, & Tsai, 2010).

Lin, Tsai, Chai, and Lee (2013) took the Survey of Preservice Teachers’ Knowledge of Teaching and Technology and revised it to fit preservice science teachers. Lin et al.’s survey consisted of 27 items divided into the seven TPACK domains. The researchers reported satisfactory validity and reliability for their survey. Zelkowski, Gleason, Cox, and Bismarck (2013) took the survey by Schmidt et al. and modified it for preservice secondary mathematics teachers. Zelkowski et al. reported good internal reliability for the test but noted that they were not able to produce meaningful factors for the PCK, TPK and TCK domains, which is consistent with the previously discussed issue of separating the domains of TPACK from one another. The final survey by Zelkowski et al. (2013) contained 22 items restricted only to the TK, CK, PK, and TPACK domains.

Self-Assessed TPACK as a Way to Study Beliefs

Self-assessment instruments for teachers’ TPACK have been argued to measure preservice teachers’ personal beliefs about their knowledge (Zelkowski et al., 2013) and in-service teachers’ confidence (Graham et al., 2009). In general, Pajares (1992) stated that knowledge and beliefs are inextricably intertwined.

We argue the same: Using self-assessment to study preservice teachers’ knowledge in different domains is also a way to study their personal beliefs in these domains. One’s own assessment of one’s knowledge in an area is intertwined with one’s personal beliefs related to that area, because beliefs also have a cognitive component dealing with context-specific knowledge (Herrington, Bancroft, Edwards, & Schairer, 2016). Thus, the preservice teachers’ self-assessment of their knowledge in the different TPACK domains may be used as a method of measuring their beliefs related to these domains. This study moves the literature forward by taking into account both the research on teachers’ beliefs regarding technology and the research related to the TPACK framework, using preservice teachers’ self-assessed TPACK as an indicator of their beliefs in the different domains.

Study Aim and Research Questions

The first aim of this study was to gain insight into developing preservice primary teachers’ self-assessed TPACK in science through an intervention in which they were acquainted with using simulations in science teaching. The second aim was to study the possible connection of preservice teachers’ beliefs measured through their self-assessed knowledge in the different domains of the TPACK framework with their attitudes toward simulations. The results of this study can be used to develop the teaching related to simulations in science teaching during preservice teacher training.

The research questions and hypotheses are as follows:

- How do primary school preservice teachers’ TK, PK, CK, and TPACK related beliefs measured through self-assessment differ when comparing the results before and after the intervention? Based on the design of our intervention, we expected the preservice teachers’ beliefs about their knowledge to improve in the CK and TPACK domains.

- How do preservice teachers’ beliefs measured through their self-assessed knowledge in the different domains of the TPACK framework affect their views on the usefulness of simulations in science teaching? According to Teo (2009), preservice teachers’ self-assessed computer skills had an effect on their assessment of the usefulness of technology in teaching. Thus, we expected the preservice teachers’ beliefs related to TK to correlate with their views on the usefulness of simulations in science education.

- How do preservice teachers’ beliefs measured through their self-assessed knowledge in the different domains of the TPACK framework affect their disposition toward integrating simulations into teaching? According to Ertmer et al. (2012), teachers’ passion for technology affects their technology integration practices. Also, Abbitt (2011) showed that preservice teachers’ TK was the only domain of the TPACK framework that predicted their confidence in their ability to integrate technology into teaching both before and after the intervention in that study. Thus, we expected the preservice teachers’ beliefs related to TK to correlate with their attitudes toward integrating simulations into their science teaching.

Study Context and Participants

The study was conducted as an intervention that was part of a science pedagogy course in the primary teacher program at the University of Jyväskylä in Finland. Preservice teachers enrolled in the course were offered the chance to participate in the intervention. All (n = 40) agreed to participate voluntarily, but only 36 of them completed all of the study instruments.

The preservice teachers were between the ages of 20 and 42, with the average age being 24.2. Thirty-one of them were female and five were male, the typical gender distribution in Finnish primary-level teacher education. Twenty-three preservice teachers reported having less than 6 months of teaching experience, and one reported having more than 2 years of experience. The rest had teaching experience ranging between 6 months and 2 years.

The preservice teachers were in different phases of their studies, ranging from their second year to the fifth year of the 5-year master of education program. The preservice teachers majored in special education, but they had chosen primary teacher studies as their minor. Only one preservice teacher of the 36 had previous experience using simulations in science learning or teaching, so the intervention served as an introduction to simulations.

Study Design and Methods

The study used a single-group pretest-posttest design to study the possible changes in the preservice teachers’ beliefs related to TPACK over time. The data were collected before and after the intervention, which focused on using simulations in science teaching.

Measuring Preservice Teachers’ TPACK With Self-Assessment

The TPACK survey used in this study (Appendix A) was adapted from Lin et al.’s (2013) and Zelkowski et al.’s (2013) studies. The items about different areas of mathematics (algebra, geometry, etc.) in Zelkowski et al.’s (2013) study were changed to items about different science subjects, since these subjects (physics, chemistry, biology, and geography) are taught together in Finnish primary schools from grades 1 to 6 and they are covered in the same science methods course as a part of the primary teacher training program at the University of Jyväskylä.

The final survey contained 29 seven-level Likert items (28 positively phrased and 1 negatively phrased) divided into four knowledge domains: CK, PK, TK, and TPACK. The decision not to include the three other knowledge domains from the TPACK framework was based on Brantley-Dias and Ertmer’s (2013) critique of the TPACK framework and the fact that our survey instrument was adapted from Zelkowski et al. (2013), who could not produce meaningful factors for these domains.

Example items, the number of items per TPACK domain, and Cronbach’s alphas for the pre- and posttest are presented in Table 1. All scales on the pre- and posttests met the commonly adopted threshold of Cronbach’s alpha > .80, indicating good reliability. The survey was administered via an online form before the intervention and after the preservice teachers had taught their lesson.

Table 1

Example Items From the TPACK Survey and the Reliability of Its Domains

| TPACK Domain | Example Item | No. of Items | Cronbach’s Alpha – Pretest | Cronbach’s Alpha – Posttest |
| --- | --- | --- | --- | --- |
| CK | I have various strategies for developing my understanding of science. | 7 | .87 | .87 |
| PK | I am able to help my students to monitor their own learning. | 8 | .87 | .92 |
| TK | I know how to solve my own technical problems when using technology. | 8 | .93 | .93 |
| TPACK | Integrating technology in teaching science will be easy and straightforward for me. | 6 | .91 | .90 |
| Overall | | 29 | .94 | .94 |
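For readers less familiar with the reliability coefficient reported in Table 1, the following Python sketch shows one common way to compute Cronbach’s alpha from item-level responses: alpha = k / (k − 1) × (1 − sum of item variances / variance of the total score), where k is the number of items. The response matrix is invented purely for illustration and is not data from this study, and the function name `cronbach_alpha` is our own choice.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for a list of respondents, each a list of k item scores."""
    k = len(responses[0])
    # Transpose so that each tuple holds all answers to one item.
    items = list(zip(*responses))
    sum_item_variances = sum(variance(item) for item in items)
    # Variance of each respondent's summed scale score.
    total_variance = variance([sum(row) for row in responses])
    return k / (k - 1) * (1 - sum_item_variances / total_variance)


# Hypothetical answers of five respondents to four 7-point Likert items.
hypothetical_responses = [
    [5, 6, 5, 6],
    [3, 3, 4, 3],
    [6, 7, 6, 6],
    [2, 3, 2, 3],
    [4, 4, 5, 4],
]

alpha = cronbach_alpha(hypothetical_responses)
print(f"Cronbach's alpha = {alpha:.2f}")
```

Items that rise and fall together across respondents, as in this made-up matrix, yield an alpha close to 1; unrelated items would push the value toward 0.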

Measuring Preservice Teachers’ Attitudes Toward Simulations in Science Teaching

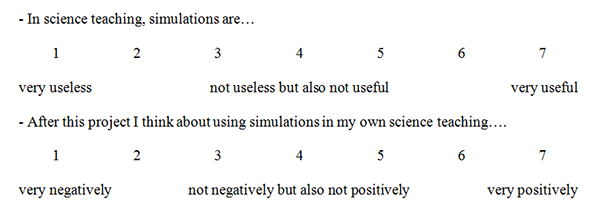

The data on the preservice teachers’ views on the usefulness of simulations in science teaching and their disposition toward integrating simulations in their science teaching were collected using 7-point Likert-scale items (Appendix B). These data were collected on paper during the last intervention meeting.

The Course of the Intervention

The 40 preservice teachers took part in the intervention in two groups of 20 teachers each. The intervention was implemented over 8 weeks and consisted of group meetings, lesson planning, and teaching a lesson. The intervention started with five weekly meetings that lasted 90 minutes each. These meetings revolved around inquiry-based teaching of science with simulations. During and between these meetings, the preservice teachers planned a physics lesson for the primary level in which they used simulations.

Physics was chosen as the subject because of the abundance of simulations that can be used in primary level physics. The lessons were planned and taught in groups of five. After the lessons all 40 of the preservice teachers participated in a final meeting that lasted 3 hours. The content of all the meetings was the same for both groups. Between the meetings, the preservice teachers worked on their own lesson planning. The course of the intervention is presented in Table 2.

Table 2

The Course of the Intervention

| Week | Event / Meeting | Content of the Meeting |
| --- | --- | --- |
| 1 | TPACK pretest | |
| 1 | Inquiry learning | Introduction to the basics of inquiry learning in science. |
| 2 | Simulations in science education | Introduction to the research results concerning the use of simulations and a chance to try some PhET simulations. |
| 3 | Dividing the subgroups and handing out lesson topics | The two groups of 20 preservice teachers were each divided into four groups of five. Each subgroup was given a topic for a physics lesson, which they had to plan around a given PhET simulation. |
| 4 | Planning the lessons | The subgroups revised the science content of their topic and presented their preliminary lesson plans. |
| 5 | Planning the lessons | The subgroups presented their final lesson plans. |
| 6–7 | Teaching the lessons | |
| 7 | TPACK posttest | |
| 8 | Final meeting and end survey (Appendix B) | The preservice teachers had a chance to share their feelings and experiences on teaching with simulations with their peers. |

The topics for the lessons taught by the preservice teachers were based on the Finnish national curriculum and were decided by the teachers of the two primary schools in which the lessons were taught. The simulations used were also decided together with the teachers to suit the progress of their pupils in their science studies. The simulations chosen for the lessons were all Physics Education Technology (PhET) simulations (retrieved from http://phet.colorado.edu/en/simulations/), which were chosen because simulations were available for each science subject and for mathematics. The PhET simulations were, thus, a good resource for primary school teachers, who must teach every science subject. The pupils in these schools ranged from third graders (10-year-olds) to sixth graders (13-year-olds). The lesson topics and simulations used are presented in Table 3.

Table 3

The Topics of the Lessons and PhET Simulations Used by the Preservice Teachers in the Lessons

| Topic | PhET Simulation Used |
| --- | --- |
| Static Electricity | Balloons and Static Electricity |
| Forms of Energy | Energy Skate Park: Basics |
| DC Circuits | Circuit Construction Kit (DC Only) |
| Balance | Balancing Act |

Data Analysis

Individual Likert scale items should be considered an ordinal level of measurement, but combining multiple Likert scale items into one construct allows the construct to be treated as an interval level of measurement (Carifio & Perla, 2007, 2008). A one-sided paired sample t-test was used to answer Research Question 1. A one-sided test was used because it can be assumed that the change is positive when comparing the answers on the pre- and posttests.
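As an illustration of the paired sample t-test mentioned above, the following Python sketch computes the t statistic from pre- and posttest scale scores: the mean of the within-person differences divided by its standard error, with n − 1 degrees of freedom. The scores are hypothetical and the function name `paired_t` is our own; this is a sketch of the general technique, not the analysis script used in the study. A one-sided p-value would then be read from the t distribution, for example with a library routine such as SciPy’s `ttest_rel` called with `alternative="greater"`.

```python
from math import sqrt
from statistics import mean, stdev


def paired_t(pre, post):
    """Return (t, degrees of freedom) for a paired sample t-test of post vs. pre."""
    diffs = [after - before for before, after in zip(pre, post)]
    n = len(diffs)
    # Standard error of the mean difference.
    standard_error = stdev(diffs) / sqrt(n)
    return mean(diffs) / standard_error, n - 1


# Hypothetical scale means of six respondents (not data from this study).
pre_scores = [3.0, 2.5, 4.0, 3.5, 2.0, 3.0]
post_scores = [3.5, 3.5, 4.0, 4.5, 3.0, 3.5]

t_value, df = paired_t(pre_scores, post_scores)
print(f"t({df}) = {t_value:.2f}")
```

A positive t indicates that the posttest scores are higher on average, which is the direction assumed by the one-sided test.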

The linear correlations between the different domains of knowledge can be studied using Pearson’s product-moment correlation coefficient (Pearson’s r; Carifio & Perla, 2007), but the correlation between the preservice teachers’ beliefs in the different domains and their attitudes toward simulations should be measured using, for example, Spearman’s rank correlation coefficients (Spearman’s ρ), because the data from individual Likert items is ordinal in nature (Carifio & Perla, 2008). In this paper, both types of correlation coefficients are presented in a single table for reasons of clarity, even though they are not meant to be compared with each other.
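To make the distinction between the two coefficients concrete, the Python sketch below computes Spearman’s ρ as Pearson’s r applied to ranks, with tied values receiving the average of their ranks. The scale scores and single-item answers are hypothetical, and the helper names (`average_ranks`, `pearson_r`, `spearman_rho`) are our own; the code illustrates the general technique rather than the software used in the study.

```python
from math import sqrt


def average_ranks(values):
    """Rank values from 1..n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        # Extend the block while the next value ties with the current one.
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        tied_rank = (start + end) / 2 + 1
        for position in range(start, end + 1):
            ranks[order[position]] = tied_rank
        start = end + 1
    return ranks


def pearson_r(x, y):
    """Pearson's product-moment correlation coefficient."""
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def spearman_rho(x, y):
    """Spearman's rank correlation: Pearson's r computed on the ranks."""
    return pearson_r(average_ranks(x), average_ranks(y))


# Hypothetical TK scale means and answers to a single 7-point usefulness item.
tk_scale = [2.1, 3.4, 3.9, 4.6, 5.2, 6.0]
usefulness_item = [3, 4, 4, 5, 7, 6]

rho = spearman_rho(tk_scale, usefulness_item)
print(f"Spearman's rho = {rho:.2f}")
```

Because only the ordering of the answers enters the calculation, the coefficient does not assume equal distances between the response options of a single Likert item.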

The analysis for Research Questions 2 and 3 depended on the preservice teachers’ answers to individual Likert scale items. Some researchers generally avoid this approach in research (Carifio & Perla, 2007), but others state that in some cases a single-item measure can be used (Gardner, Cummings, Dunham, & Pierce, 1998; Wanous, Reichers, & Hudy, 1997). Trying to come up with different items or synonyms to measure the same construct (e.g., preservice teachers’ views of the usefulness of simulations) may have increased the chance to include items that are not proper synonyms of, for example, usefulness, which is a problem raised by Drolet and Morrison (2001).

Results

The results are presented in three sections, each based on one of the research questions.

Self-Assessed TPACK Before and After the Intervention

The possible relations between the knowledge domains and their relative stability were studied by calculating their Pearson’s product-moment correlation coefficients (Table 4).

Table 4

Correlation Coefficients for the Domains of Knowledge

| Area of Study | 1[a] | 2[a] | 3[a] | 4[a] | 5[a] | 6[a] | 7[a] | 8[a] | 9[b] | 10[b] |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| 1. CK – pre[a] | – | | | | | | | | | |
| 2. PK – pre[a] | .43** | – | | | | | | | | |
| 3. TK – pre[a] | .16 | .36* | – | | | | | | | |
| 4. TPACK – pre[a] | .33* | .57** | .62* | – | | | | | | |
| 5. CK – post[a] | .69** | .35* | .19 | .25 | – | | | | | |
| 6. PK – post[a] | .32* | .61** | .30 | .23 | .56** | – | | | | |
| 7. TK – post[a] | .16 | .34* | .88** | .57** | .36* | .40* | – | | | |
| 8. TPACK – post[a] | .28 | .45* | .39* | .37* | .59** | .75** | .50** | – | | |
| 9. Usefulness[b] | .03 | .08 | .41* | .22 | -.17 | -.11 | .39* | .09 | – | |
| 10. Integration[b] | .11 | .17 | .48** | .30 | .00 | .09 | .44** | .19 | .59** | – |

Note. * p < .05; ** p < .01. [a] Pearson’s r. [b] Spearman’s ρ.

The results indicate that the knowledge domains are distinct: The correlations between them are low enough for the domains to be treated as separate from each other. The squared correlation coefficients are presented in Table 5. More than half of the variance in the beliefs measured by self-assessed knowledge in any domain could not be attributed to any other domain, with the only exception being the PK-TPACK (post) correlation. Therefore, a correction for multiple comparisons, such as the Bonferroni correction, was not considered necessary when using paired sample t-tests. Also, the domains of knowledge were relatively stable when the pre- and posttests were compared, especially the CK, PK, and TK domains (see the correlations in Table 4); that is, the same preservice teachers who assessed their knowledge in these domains as high in the pretest also assessed it as high in the posttest.

Table 5

Squared Correlation Coefficients for the Domains of Knowledge

| Domains of Knowledge | Squared Correlation Coefficient – Pretests | Squared Correlation Coefficient – Posttests |
| --- | --- | --- |
| CK – PK | .19 | .31 |
| CK – TK | .03 | .13 |
| CK – TPACK | .11 | .35 |
| PK – TK | .13 | .16 |
| PK – TPACK | .32 | .56 |
| TK – TPACK | .38 | .32 |

The means and standard deviations from the pre- and posttests for the different domains of knowledge are presented in Table 6. The metric used to estimate and describe the change in the preservice teachers’ beliefs regarding the different domains of the TPACK framework was Cohen’s d, with the square root of the average of the pre- and posttests’ standard deviations squared as the standardizer (see rationale in Cumming, 2012, p. 291). Cohen’s d values of 0.20 < d < 0.50 represent a small effect, values of 0.50 < d < 0.80 represent a moderate effect, and values 0.80 < d represent a large effect (Cohen, 1988).

Table 6

Results From the TPACK Survey

| Domain of Knowledge | Pretest M | Pretest SD | Posttest M | Posttest SD | t-value | p-value | Cohen’s d-value |
| --- | --- | --- | --- | --- | --- | --- | --- |
| CK | 3.23 | .90 | 3.65 | .95 | 3.52 | < .001 | .45 |
| PK | 4.23 | .78 | 4.85 | .82 | 5.21 | < .001 | .77 |
| TK | 3.65 | 1.29 | 3.76 | 1.17 | 1.04 | < .15 | .09 |
| TPACK | 2.97 | .81 | 4.20 | .98 | 7.28 | < .001 | 1.37 |
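The effect sizes in Table 6 can be reproduced from the reported means and standard deviations. The following Python sketch applies the standardizer described above, the square root of the average of the squared pre- and posttest standard deviations, to the rounded values in Table 6 and labels each result with Cohen’s (1988) thresholds. The function names and the label “negligible” for values below 0.20 are our own additions for illustration.

```python
from math import sqrt


def cohens_d_av(m_pre, sd_pre, m_post, sd_post):
    """Cohen's d with the root mean of the two squared SDs as the standardizer."""
    standardizer = sqrt((sd_pre ** 2 + sd_post ** 2) / 2)
    return (m_post - m_pre) / standardizer


def effect_label(d):
    """Label an effect size using the thresholds of Cohen (1988)."""
    size = abs(d)
    if size >= 0.80:
        return "large"
    if size >= 0.50:
        return "moderate"
    if size >= 0.20:
        return "small"
    return "negligible"  # our own label for values below the 'small' threshold


# (pretest M, pretest SD, posttest M, posttest SD) as reported in Table 6.
table_6 = {
    "CK": (3.23, 0.90, 3.65, 0.95),
    "PK": (4.23, 0.78, 4.85, 0.82),
    "TK": (3.65, 1.29, 3.76, 1.17),
    "TPACK": (2.97, 0.81, 4.20, 0.98),
}

effect_sizes = {domain: cohens_d_av(*stats) for domain, stats in table_6.items()}
for domain, d in effect_sizes.items():
    print(f"{domain}: d = {d:.2f} ({effect_label(d)})")
```

Run on the rounded table values, the sketch returns .45, .77, .09, and 1.37 for CK, PK, TK, and TPACK, matching the last column of Table 6.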

The mean scores for all four TPACK domains were higher on the posttest than on the pretest; TK was the only domain for which the difference was not significant at the .05 level. The effect sizes for the rest of the domains ranged from small to large.

TPACK and View of the Usefulness of Simulations in Science Teaching

The correlations between the beliefs related to different domains of the TPACK framework and the preservice teachers’ view of the usefulness of simulations in science teaching are presented in Table 4. TK was the only domain with a statistically significant correlation at the .05 level with the preservice teachers’ views on the usefulness of simulations in science teaching in the pre- and posttests. The correlation coefficients show a low positive correlation (Hinkle, Wiersma, & Jurs, 2003).

TPACK and Disposition Toward Integrating Simulations Into Science Teaching

The correlations between the beliefs related to different domains of the TPACK framework and the preservice teachers’ disposition toward integrating simulations into their science teaching are presented in Table 4. TK was the only domain with a statistically significant correlation at the .05 level with the preservice teachers’ disposition toward integrating simulations in their science teaching in both the pretest and the posttest. The correlation coefficients show a low positive correlation (Hinkle et al., 2003).

Study Validity and Limitations

The study group represented about 25% of the annual intake of primary teacher students at the University of Jyväskylä. The age and gender distribution were typical for primary teacher education in Finland. Thus, the results of this study can be representative of the cohort group of the entire primary teacher student population of the university but may not be generalizable across all contexts and populations.

The validity of the survey used in this study could not be determined using statistical methods due to the small size of the study group. However, the survey was adapted from two validated surveys (Lin et al., 2013; Zelkowski et al., 2013), and it had good reliability (see Table 1). As with all self-report scales, the results may be biased, because the participants of the study may have given socially desirable answers. Also, the age and the gender distribution of the participants might have had an effect on their answers.

We do not claim that the TPACK survey used in this study measures objectively what the preservice teachers knew. The self-assessed knowledge was used to study the preservice teachers’ beliefs about their knowledge in the TPACK domains. Preservice teachers can be overconfident about their skills or may lack confidence (Zelkowski et al., 2013).

Also, although attributing the change in self-assessed knowledge to any specific activity is not possible, the participants of the study were engaged in developing and thinking about their technology integration practices in science teaching through planning the lessons and using simulations in their teaching. The change in their self-assessed knowledge can be reasonably attributed to this activity.

Discussion

The results from the study indicate that the introduction to simulations in science described in this paper had a small to large effect on the preservice teachers’ beliefs in the CK, PK, and TPACK domains of the TPACK framework, which partly confirmed our expectations under Research Question 1. The possible reasons for the change in beliefs in the different domains must be looked at separately.

As a part of the intervention, the preservice teachers had to revise the scientific content relating to the subjects of their lessons, possibly explaining the change in beliefs in the CK domain. The change in beliefs related to the PK domain is interesting, considering the fact that the items in the survey instrument relating to PK were not related to PCK in science but to general PK. The preservice teachers possibly could not distinguish between general PK and PCK relating to science teaching, possibly due to their lack of experience with teaching science.

The change in the beliefs in the TK domain was not statistically significant at the .05 level. This result was to be expected because the focus of the intervention was on ways simulations can be used in science teaching and not in general TK.

The effect size was the largest in the beliefs related to the TPACK domain. This result is encouraging, taking into account the fact that the whole focus of the intervention was to give preservice teachers experience in integrating one application of educational technology into science teaching. The intervention increased the preservice teachers’ beliefs in their ability to plan and execute science lessons that integrate technology.

Preservice teachers’ belief in their TK correlated with their views on the usefulness of simulations in science teaching, which confirmed our expectation under Research Question 2. Their disposition toward integrating simulations into their science teaching also correlated with their belief in their TK, which confirmed our expectation under Research Question 3. Our observations during the intervention on the preservice teachers’ technological skills were that they all possessed the technological skills required to operate computer simulations from a technical viewpoint. The preservice teachers’ attitudes toward simulations may not be linked to the actual presence or lack of technological knowledge and skills required to use the simulations but to the preservice teachers’ conceptions about themselves as users of technology.

Earlier research with in-service teachers showed a connection between personal interest in technology and successful integration of technology in teaching (Ertmer et al., 2012). Our results support the same connection, because a personal interest in technology probably increased the preservice teachers’ belief in their TK. The results of this study add to the literature by connecting preservice teachers’ beliefs about their TK to their attitudes toward technology in teaching.

The fact that belief in the TK domain, and not belief in the TPACK domain, correlated with the preservice teachers’ attitude toward simulations provides new support for the previous claims that preservice teachers’ views of their PK are still being formed (Kontkanen et al., 2014; Ozgun-Koca et al., 2010). It might be easier for the preservice teachers to assess their TK than their ability to plan and carry out science lessons in which technology is used appropriately because they lack experience. They probably had not had chances to try out teaching science with technology before the intervention. This would cause the preservice teachers’ assessment of their TPACK to be based more on expectations and assumptions than actual reflection. However, the preservice teachers had a better grasp on their TK because they came in contact with technology every day and, thus, had a chance to form a conception of themselves as users of technology.

Implications

The results show that the belief in the TK domain correlated with the preservice teachers’ attitudes toward simulations in both the pre- and posttests. This result implies that our intervention did not change the fact that the more technologically confident preservice teachers were more positive toward integrating simulations in their teaching and saw simulations as being more useful. Previous research has shown that teachers’ internal factors related to technology integration are hard to change, because they require teachers to confront their existing beliefs and to apply a new view of doing and seeing things to their learning (Polly, Mims, Shepherd, & Inan, 2010). The results from this study supported that claim.

Because preservice teachers’ beliefs about their TK correlate with their attitudes toward simulations, efforts to increase preservice teachers’ TK and self-confidence should be made throughout their teacher training. This strategy may raise the preservice teachers’ beliefs in their TK and improve their attitudes toward simulations.

At the same time, courses dealing with science should include chances to use technology in supporting different pedagogical approaches (Maeng et al., 2013). Zacharia et al. (2011) argued that preservice teachers should be exposed to the learning and teaching benefits of simulations during their teacher training, while also developing the competencies related to teaching science with simulations throughout their studies. In general, a focus on content, pedagogy, and technology at all stages of the teacher training programs would benefit future technology integration (Koehler & Mishra, 2009).

The results of this study and the implications made are reinforced by psychological research on achievement motivation and behavior. Eccles et al. (1983) originally developed an expectancy-value model of achievement choice to study children’s and adolescents’ performance and choice in mathematics. The model has developed since then, and the most recent version (Wigfield & Eccles, 2000) has also been used to study learning motivation in adults (Gorges & Kandler, 2012). Among other factors, the model states that self-concept of one’s abilities and sense of importance or utility are related to achievement-related choices.

The results of this study fit that model. In this study, a correlation was found between the preservice teachers’ beliefs about their TK (self-concept of abilities) and both their views on the usefulness of simulations (sense of utility) and their dispositions toward integrating simulations into their science teaching (achievement-related choice). This result, combined with the expectancy-value model of achievement choice, strengthens the argument that preservice teachers’ beliefs about their TK should be developed throughout their teacher training in order to encourage them to integrate simulations in science teaching.

Suggestions for Future Research

Conducting interviews with the preservice teachers would give insight into their personal views on technology integration in their teaching. In addition, following the same preservice teachers from their teacher training program to their work as primary school teachers would provide information on the actual integration of simulations into teaching. Using the same study methods with in-service science teachers in a professional development program would enable researchers to study the role of self-assessed knowledge in different domains in using simulations in science teaching.

References

Abbitt, J. T. (2011). An investigation of the relationship between self-efficacy beliefs about technology integration and technological pedagogical content knowledge (TPACK) among preservice teachers. Journal of Digital Learning in Teacher Education, 27(4), 134-143.

Archambault, L. M., & Barnett, J. H. (2010). Revisiting technological pedagogical content knowledge: Exploring the TPACK framework. Computers & Education, 55(4), 1656-1662.

Brantley-Dias, L., & Ertmer, P. A. (2013). Goldilocks and TPACK: Is the construct ‘just right?’ Journal of Research on Technology in Education, 46(2), 103-128.

Carifio, J., & Perla, R. J. (2007). Ten common misunderstandings, misconceptions, persistent myths and urban legends about Likert scales and Likert response formats and their antidotes. Journal of Social Sciences, 3(3), 106.

Carifio, J., & Perla, R. (2008). Resolving the 50‐year debate around using and misusing Likert scales. Medical Education, 42(12), 1150-1152.

Chai, C. S., Koh, J. H. L., & Tsai, C. (2010). Facilitating preservice teachers’ development of technological, pedagogical, and content knowledge (TPACK). Educational Technology & Society, 13(4), 63-73.

Chai, C. S., Ling Koh, J. H., Tsai, C., & Lee Wee Tan, L. (2011). Modeling primary school preservice teachers’ technological pedagogical content knowledge (TPACK) for meaningful learning with information and communication technology (ICT). Computers & Education, 57(1), 1184-1193.

Chen, R. (2010). Investigating models for preservice teachers’ use of technology to support student-centered learning. Computers & Education, 55(1), 32-42.

Cohen, J. (1988). Statistical power analysis for the behavioral sciences (2nd ed.). Hillsdale, NJ: Lawrence Erlbaum Associates.

Cumming, G. (2012). Understanding the new statistics: Effect sizes, confidence intervals, and meta-analysis. New York, NY: Routledge.

Drolet, A. L., & Morrison, D. G. (2001). Do we really need multiple-item measures in service research? Journal of Service Research, 3(3), 196-204.

Eccles, J., Adler, T. F., Futterman, R., Goff, S. B., Kaczala, C. M., Meece, J. L., & Midgley, C. (1983). Expectancies, values and academic behaviors. In J. T. Spence (Ed.), Achievement and achievement motivation (pp. 75-146). San Francisco, CA: W.H. Freeman.

Ertmer, P. A., Ottenbreit-Leftwich, A. T., Sadik, O., Sendurur, E., & Sendurur, P. (2012). Teacher beliefs and technology integration practices: A critical relationship. Computers & Education, 59(2), 423-435.

Gardner, D. G., Cummings, L. L., Dunham, R. B., & Pierce, J. L. (1998). Single-item versus multiple-item measurement scales: An empirical comparison. Educational and Psychological Measurement, 58(6), 898-915.

Gorges, J., & Kandler, C. (2012). Adults’ learning motivation: Expectancy of success, value, and the role of affective memories. Learning and Individual Differences, 22(5), 610-617.

Graham, R., Burgoyne, N., Cantrell, P., Smith, L., St Clair, L., & Harris, R. (2009). Measuring the TPACK confidence of inservice science teachers. TechTrends, 53(5), 70-79.

Herrington, D. G., Bancroft, S. F., Edwards, M. M., & Schairer, C. J. (2016). I want to be the inquiry guy! How research experiences for teachers change beliefs, attitudes, and values about teaching science as inquiry. Journal of Science Teacher Education, 27(2), 1-22.

Hinkle, D. E., Wiersma, W., & Jurs, S. G. (2003). Applied statistics for the behavioral sciences (5th ed.). Boston, MA: Houghton Mifflin.

Hofer, M., & Grandgenett, N. (2012). TPACK development in teacher education: A longitudinal study of preservice teachers in a secondary MA ed. program. Journal of Research on Technology in Education, 45(1), 83-106.

Hofer, M., & Harris, J. (2010). Differentiating TPACK development: Using learning activity types with inservice and preservice teachers. In D. Gibson & B. Dodge (Eds.), Proceedings of the Society for Information Technology & Teacher Education International Conference 2010 (pp. 3857-3864). Chesapeake, VA: Association for the Advancement of Computing in Education.

Jaakkola, T., & Nurmi, S. (2008). Fostering elementary school students’ understanding of simple electricity by combining simulation and laboratory activities. Journal of Computer Assisted Learning, 24(4), 271-283.

Koballa, T. R. J. (1989). Changing and measuring attitudes in the science classroom (Research Matters to the Science Teacher, No. 8901). Athens, GA: The University of Georgia and The National Association for Science Teaching.

Koehler, M. J., & Mishra, P. (2005). What happens when teachers design educational technology? The development of technological pedagogical content knowledge. Journal of Educational Computing Research, 32(2), 131-152.

Koehler, M. J., & Mishra, P. (2009). What is technological pedagogical content knowledge (TPACK)? Contemporary Issues in Technology and Teacher Education, 9(1), 60-70. Retrieved from https://citejournal.org/vol9/iss1/general/article1.cfm

Koehler, M. J., Shin, T. S., & Mishra, P. (2012). How do we measure TPACK? Let me count the ways. In R. N. Ronau, C. R. Rakes & M. L. Niess (Eds.), Educational technology, teacher knowledge, and classroom impact: A research handbook on frameworks and approaches (pp. 16). Hershey, PA: Information Science Reference.

Koh, J. H. L., Chai, C. S., & Tsai, C. (2010). Examining the technological pedagogical content knowledge of Singapore pre‐service teachers with a large‐scale survey. Journal of Computer Assisted Learning, 26(6), 563-573.

Kontkanen, S., Dillon, P., Valtonen, T., Renkola, S., Vesisenaho, M., & Väisänen, P. (2014). Preservice teachers’ experiences of ICT in daily life and in educational contexts and their proto-technological pedagogical knowledge. Education and Information Technologies. Advance online publication retrieved from http://link.springer.com/article/10.1007%2Fs10639-014-9361-5

Kriek, J., & Stols, G. (2010). Teachers’ beliefs and their intention to use interactive simulations in their classrooms. South African Journal of Education, 30(3), 439-456.

Lawless, K. A., & Pellegrino, J. W. (2007). Professional development in integrating technology into teaching and learning: Knowns, unknowns, and ways to pursue better questions and answers. Review of Educational Research, 77(4), 575-614.

Lin, T., Tsai, C., Chai, C. S., & Lee, M. (2013). Identifying science teachers’ perceptions of technological pedagogical and content knowledge (TPACK). Journal of Science Education and Technology, 22(3), 325-336.

Maeng, J. L., Mulvey, B. K., Smetana, L. K., & Bell, R. L. (2013). Preservice teachers’ TPACK: Using technology to support inquiry instruction. Journal of Science Education and Technology, 22(6), 838-857.

Mishra, P., & Koehler, M. J. (2006). Technological pedagogical content knowledge: A framework for teacher knowledge. The Teachers College Record, 108(6), 1017-1054.

NGSS Lead States. (2013). Next generation science standards: For states, by states. Washington, DC: The National Academies Press.

Ozgun-Koca, S. A., Meagher, M., & Edwards, M. T. (2010). Preservice teachers’ emerging TPACK in a technology-rich methods class. Mathematics Educator, 19(2), 10-20.

Pajares, M. F. (1992). Teachers’ beliefs and educational research: Cleaning up a messy construct. Review of Educational Research, 62(3), 307-332.

Polly, D., Mims, C., Shepherd, C. E., & Inan, F. (2010). Evidence of impact: Transforming teacher education with preparing tomorrow’s teachers to teach with technology (PT3) grants. Teaching and Teacher Education, 26(4), 863-870.

Rutten, N., van Joolingen, W., & van der Veen, J. (2012). The learning effects of computer simulations in science education. Computers & Education, 58(1), 136-153.

Ryan, S. (2004). Message in a model: Teachers’ responses to a court-ordered mandate for curriculum reform. Educational Policy, 18(5), 661-685.

Schmidt, D. A., Baran, E., Thompson, A. D., Mishra, P., Koehler, M. J., & Shin, T. S. (2009). Technological pedagogical content knowledge (TPACK): The development and validation of an assessment instrument for preservice teachers. Journal of Research on Technology in Education, 42(2), 123-149.

Schweingruber, H., Keller, T., & Quinn, H. (2012). A framework for K-12 science education: Practices, crosscutting concepts, and core ideas. Washington, DC: National Academies Press.

Stipek, D. J., & Byler, P. (1997). Early childhood education teachers: Do they practice what they preach? Early Childhood Research Quarterly, 12(3), 305-325.

Teo, T. (2009). Modelling technology acceptance in education: A study of preservice teachers. Computers & Education, 52(2), 302-312.

Wanous, J. P., Reichers, A. E., & Hudy, M. J. (1997). Overall job satisfaction: How good are single-item measures? Journal of Applied Psychology, 82(2), 247.

Wigfield, A., & Eccles, J. S. (2000). Expectancy–value theory of achievement motivation. Contemporary Educational Psychology, 25(1), 68-81.

Zacharia, Z. (2003). Beliefs, attitudes, and intentions of science teachers regarding the educational use of computer simulations and inquiry‐based experiments in physics. Journal of Research in Science Teaching, 40(8), 792-823.

Zacharia, Z., Loizou, E., & Papaevripidou, M. (2012). Is physicality an important aspect of learning through science experimentation among kindergarten students? Early Childhood Research Quarterly, 27(3), 447-457.

Zacharia, Z., Rotaska, I., & Hovardas, T. (2011). Development and test of an instrument that investigates teachers’ beliefs, attitudes and intentions concerning the educational use of simulations. In I. M. Saleh, & M. S. Khine (Eds.), Attitude research in science education: Classic and contemporary measurement (pp. 81-115). Charlotte, NC: Information Age Publishing.

Zelkowski, J., Gleason, J., Cox, D. C., & Bismarck, S. (2013). Developing and validating a reliable TPACK instrument for secondary mathematics preservice teachers. Journal of Research on Technology in Education, 46(2), 173-206.

Author Notes

This study was funded by a grant from the Technology Industries of Finland Centennial Foundation.

Antti Lehtinen

University of Jyvaskyla

FINLAND

Pasi Nieminen

University of Jyvaskyla

FINLAND

Jouni Viiri

University of Jyvaskyla

FINLAND

Appendix A

The TPACK Survey

The answers for all the items are given using a 7-point Likert scale (1 = I disagree strongly, 7 = I agree strongly). The letter after each item marks its source: L = Lin et al. (2013), Z = Zelkowski et al. (2013).

CK1: I have sufficient knowledge of science to teach science. L

CK2: I can think about the content of science like a subject matter expert. L

CK3: I have various strategies for developing my understanding of science. Z

CK4: I have a deep and wide understanding of biology. Z

CK5: I have a deep and wide understanding of physics. Z

CK6: I have a deep and wide understanding of geography. Z

CK7: I have a deep and wide understanding of chemistry. Z

PK1: I am able to stretch my students’ thinking by creating challenging tasks for them. L

PK2: I am able to guide my students to adopt appropriate learning strategies. L

PK3: I am able to help my students to monitor their own learning. L

PK4: I am familiar with common student understandings and misconceptions. Z

PK5: I know when it is appropriate to use a variety of teaching approaches (e.g., problem/project-based learning, inquiry learning, collaborative learning, direct instruction) in a classroom setting. Z

PK6: I know how to assess student performance in a classroom. Z

PK7: I can assess student learning in multiple ways. Z

PK8: I can adapt my teaching based upon what students currently understand or do not understand. Z

TK1: I have the technical skills to use technology effectively. L

TK2: I can learn technology easily. L

TK3: I know how to solve my own technical problems when using technology. L

TK4: I keep up with important new technologies. L

TK5: I have had sufficient opportunities to work with different technologies. Z

TK6: I frequently play around with the technology. Z

TK7: I know a lot about different technologies. Z

TK8: When I encounter a problem using technology, I seek outside help. Z (negatively phrased)

TPACK1: I can teach lessons that appropriately combine biology, technologies, and teaching approaches. Z

TPACK2: I can teach lessons that appropriately combine physics, technologies, and teaching approaches. Z

TPACK3: I can teach lessons that appropriately combine geography, technologies, and teaching approaches. Z

TPACK4: I can teach lessons that appropriately combine chemistry, technologies, and teaching approaches. Z

TPACK5: I can provide leadership in helping others to coordinate the use of science, technologies, and teaching approaches in my school and/or district. L

TPACK6: Integrating technology with teaching science will be easy and straightforward for me. Z
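As an illustration of how responses to this survey could be scored, the following Python sketch averages the items in each domain after reverse-coding the negatively phrased item TK8 (on a 7-point scale, a response r becomes 8 - r). The function name, the dictionary layout of the responses, and the use of the domain mean as the score are assumptions made for illustration; they are not taken from the analysis reported in this study.

```python
from statistics import mean

SCALE_MAX = 7  # 7-point Likert scale: 1 = disagree strongly, 7 = agree strongly
REVERSED_ITEMS = {"TK8"}  # the negatively phrased item in this appendix

def domain_scores(responses):
    """Return the mean score per domain (CK, PK, TK, TPACK) for one respondent.

    `responses` maps item labels such as "CK1" to integers from 1 to 7.
    Hypothetical helper; averaging items within a domain is an assumption.
    """
    by_domain = {}
    for item, value in responses.items():
        if not 1 <= value <= SCALE_MAX:
            raise ValueError(f"{item}: response {value} is outside 1..{SCALE_MAX}")
        if item in REVERSED_ITEMS:
            value = SCALE_MAX + 1 - value  # reverse-code: 1 <-> 7, 2 <-> 6, ...
        domain = item.rstrip("0123456789")  # "TPACK3" -> "TPACK"
        by_domain.setdefault(domain, []).append(value)
    return {domain: mean(values) for domain, values in by_domain.items()}
```

For example, a respondent who answers 7 on TK1 and 1 on TK8 receives a TK score of 7 under this scheme, because strong disagreement with the negatively phrased item counts as high confidence.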

Appendix B

Items About Attitudes Toward Simulations
