In the 21st century, mastery of instructional technology is a key element of a successful teacher’s skill set. Many students use technology at home, and technological knowledge is now mandatory for productive participation in society, education, and the workforce. Thus, teachers must be responsible for teaching technology skills alongside their content areas. While it would be unfair to demand that teachers incorporate all computer skills in their classes (particularly, skills like computer programming that require deep conceptual knowledge), teachers can and should teach their content through technology and teach technology through their content.

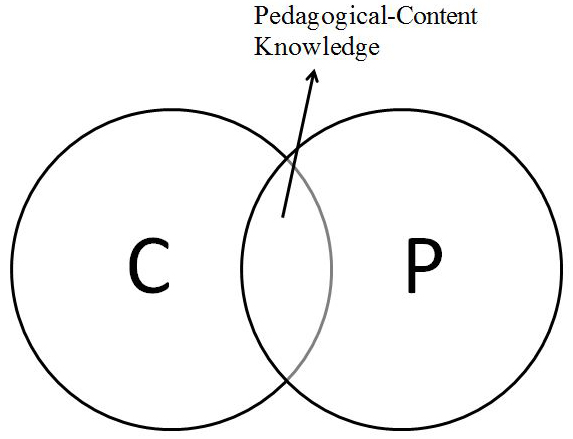

With this concept in mind, the technological pedagogical content knowledge framework (later changed to technology, pedagogy, and content knowledge or TPACK) was described in 2006 by Mishra and Koehler (based upon the prior work of other authors). This framework was itself a revision and extension of the pedagogical content knowledge (PCK) framework, developed in 1986 by Shulman. The original PCK framework asserted that teachers need three types of knowledge to be successful in the classroom (see Figure 1). First, teachers need to know the essential facts and skills of the subject they teach, called content knowledge (CK). Second, teachers need to know skills and strategies that help students learn, called pedagogical knowledge (PK). Finally, teachers need the ability to select appropriate pedagogical strategies to teach their content to the specific audience for the lesson.

This interrelation of content and pedagogy knowledge is termed PCK. According to Mishra and Koehler (2006), “Shulman (1986) argued that having knowledge of subject matter and general pedagogical strategies, though necessary, was not sufficient for capturing the knowledge of good teachers” (p. 1021). Thus, a teacher can have both CK and PK without the essential PCK.

Figure 1. A recreation of Mishra and Koehler’s (2006) model of Shulman’s (1986) PCK framework in “Technological pedagogical content knowledge: A framework for integrating technology in teacher knowledge” by P. Mishra & M. J. Koehler, 2006, Teachers College Record, 108(6), p. 1022. Copyright 2006 by Teachers College, Columbia University.

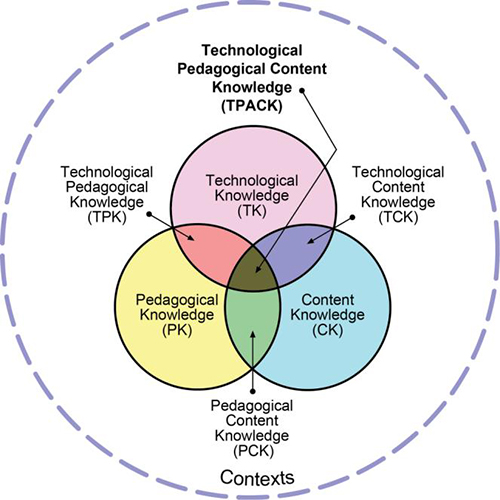

The TPACK model incorporates a third component knowledge domain and three new interrelated knowledge domains (see Figure 2). The T in TPACK stands for technological knowledge (denoted TK). TK refers to a teacher’s knowledge and use of technology on a daily basis, not necessarily connected to contact with students. For example, a teacher who uses e-mail, electronic gradebooks, social networking, or a web profile page has some level of TK.

The first new interrelation, technological content knowledge (TCK), refers to a teacher’s awareness and use of content-appropriate technology. For example, a mathematics teacher may use a graphing calculator or statistical software to demonstrate a concept within a lesson. The second new interrelation, technological pedagogical knowledge (TPK), encompasses a teacher’s ability to teach technology skills to students and expose students to technology in the classroom. In this domain, teachers select appropriate resources and strategies to develop their students’ technology skills, but they may do so in a way that is divorced from the core content of the class. This knowledge domain also includes passive exposure to technology, such as displaying content with an LCD projector. In such situations, while the teacher selected an appropriate technological resource for a teaching purpose, students were not instructed in how to use the technology.

The final interrelation, technological pedagogical content knowledge, describes a teacher’s ability to plan lessons and select appropriate technological resources and pedagogical strategies with technology to teach students relevant content. When TPACK is evidenced, the technology used in the lesson complements and enhances the students’ understanding of the content, instead of just being an add-on. When the teacher is skilled with TPACK, students also have the opportunity to learn how to use technology relevant to the content while also gaining general technological skills and concepts that they may transfer to other content areas and use in everyday life.

Figure 2. The new TPACK framework. Reproduced by permission of the publisher, © 2012 by tpack.org.

Current Models of TPACK

TPACK has been widely discussed and debated in the literature of educational research. While most scholars agree that the attainment of TPACK is a worthy goal for teachers, the question of how to get there remains unanswered.

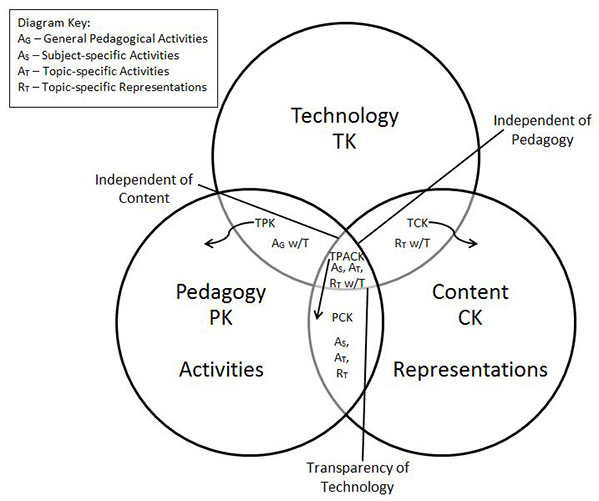

One of the first enhancements to the TPACK framework was to clarify the perceived fuzzy boundaries between some of the knowledge constructs (Cox & Graham, 2009). Building on the work in Cox’s (2008) doctoral dissertation, Cox and Graham (2009) used the process of conceptual analysis to create “an elaborated model of the TPACK framework that elucidates the essential features and boundaries between each of the constructs” (p. 61). They first used flowchart-like graphic organizers to categorize the various components of a lesson. They then placed the categories generated by the analysis into each region of their Venn diagram. By doing so, they indicated representative actions that teachers could take to demonstrate their knowledge and skills within each domain (see Figure 3). The goal of the elaborated model is to classify specific teacher actions, not teachers themselves.

Figure 3. A recreation of the elaborated TPACK model in A conceptual analysis of technological pedagogical content knowledge (Unpublished doctoral dissertation) by S. Cox, 2008, Brigham Young University, Provo, UT.

Next, several scholars examined the teacher perception of the “path” to TPACK using quantitative surveys to measure teacher self-perception of their knowledge and skills in each domain (e.g., Angeli & Valanides, 2009; Archambault & Barnett, 2010; Chai, Koh, & Tsai, 2010; Koh & Chai, 2011; Koh, Chai, & Tsai, 2010, 2013; Schmidt et al., 2009; Shinas, Yilmaz-Ozden, Mouza, Karchmer-Klein, & Glutting, 2013). The goal of these studies was to determine which knowledge domains teachers perceived as having the most influence on developing their TPACK.

The first survey created was the Survey of Preservice Teachers’ Knowledge of Teaching and Technology, developed by Schmidt et al. (2009). This survey was widely adapted and modified in many of the other studies previously referenced. However, two significant inconsistencies and disputes developed within the literature.

The first dispute was over the nature of the knowledge domains themselves. Initially, Mishra and Koehler (2006) used the term interrelational to refer to the domains formed by the combination of two or more separate knowledge domains. However, two differing perspectives on these domains have emerged in the literature. An integrative model of TPACK presupposes that these interrelational knowledge constructs are supported by the component knowledge areas while remaining separately intact and distinct. Scholars using this integrative model often refer to these domains as intersectional rather than interrelational. Cox (2008), in her elaborated TPACK model, made the implicit assumption that the original TPACK model was integrative, since she assigned teacher behaviors and strategies to all areas of the Venn diagram.

This model, whether stated explicitly or not, dominated the early scholarly discourse on TPACK. However, scholars such as Angeli and Valanides (2009) and Archambault and Barnett (2010) began to question this perspective. Instead, they viewed TPACK as a transformative model, claiming that two component knowledge areas blend to create an interrelational knowledge domain that cannot be unwoven afterwards.

The difference between the integrative and the transformative models is significant, because “for the researchers who study…the transformative model of TPACK, which suggests that TK, CK, and PK cannot be separated when they form TPACK, Cox and Graham’s definition of TPACK in the TPACK framework is limited” (Canbazoglu Bilici, Yamak, Kavak, & Guzey, 2013, p. 41). Angeli and Valanides (2009) provided further evidence for the transformative model by refuting the claim “that growth in any of the related constructs (i.e., content, technology, pedagogy) automatically contributes to growth in [TPACK]” (p. 158).

Finally, Shinas et al. (2013) acknowledged “the possibility of adopting a transformative perspective to the examination of TPACK as a unique knowledge body that is more than the sum of its parts” (p. 357). No consensus can yet be found in the literature about which approach to the interrelational knowledge areas of the TPACK framework has more validity. As a result, there is a lack of consistent terminology in the literature. In this paper, to be viewpoint neutral, we will use the term interrelational except when describing actual intersections on a diagram.

Neither the integrative model nor the transformative model necessarily indicates which knowledge areas have the largest impact on a teacher’s TPACK, which leads to the second inconsistency. Little consensus exists regarding the degree to which each knowledge construct impacts a teacher’s actual or perceived TPACK. Different scholars have suggested that certain factors have no or negligible impact on teachers’ perceptions of their own TPACK. Schmidt et al. (2009) found a significantly positive correlation between PCK and TPACK, but Koh, Chai, and Tsai (2013) claimed that teachers did not perceive either CK or PCK to significantly influence their TPACK.

Furthermore, validity outcomes for surveys were inconsistent between studies: for example, Schmidt et al. (2009) validated all seven knowledge domains, but Chai, Koh, and Tsai (2010) validated only four factors in the model. Noting this issue with the previous work on the TPACK framework, Shinas et al. (2013) performed a large study with a population of American preservice teachers using the Survey of Preservice Teachers’ Knowledge of Teaching and Technology developed by Schmidt et al. (2009). However, they were forced to conclude, “This body of work, including the study reported in this manuscript, has failed to establish a satisfactory acceptable level of discriminant validity for the TPACK constructs” (Shinas et al., 2013, p. 356).

These differing results, and the differing interpretations of them, may stem from the researchers’ adoption of the integrative model versus the transformative model; as Canbazoglu Bilici et al. (2013) observed: “These mixed results are closely related to the TPACK models (transformative vs. integrative) used by the researchers to develop the existing surveys” (p. 50). Nevertheless, while the analysis of the results of these surveys did not lead to definitive conclusions about the nature of TPACK, each study evidenced some measure of validity. Thus, such surveys are still useful as one data point to measure an individual teacher’s TPACK.

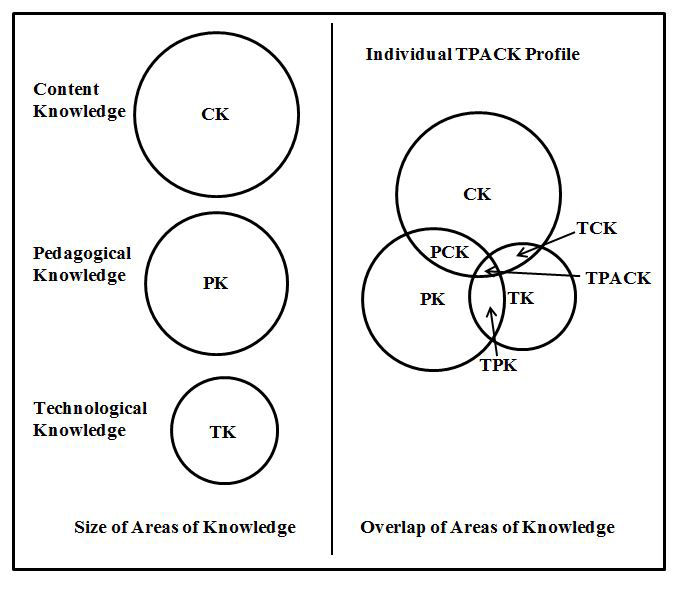

Some scholars have attempted to use a qualitative approach for measuring a teacher’s TPACK instead of a quantitative approach. One particular study is significant in this regard, as the researchers used a qualitative approach while attempting to alter the visual representation of the TPACK model to better reflect a specific teacher’s TPACK knowledge profile. Kushner Benson and Ward (2013) studied TPACK measures for three instructors in an online, higher education environment. Using qualitative data, such as the course syllabus, participant interviews, and online discussion board postings, the researchers created three different-sized circles to represent each teacher’s CK, PK, and TK. They then placed the circles to create intersections that measured relative strengths in the corresponding interrelational knowledge areas (see Figure 4).

Although this novel approach creates a viable snapshot of a teacher’s TPACK profile at any given moment, it is difficult to implement for a larger sample of teachers. Additionally, no units or standardization govern the size of the circles, so only relative size is apparent, and comparing different TPACK profiles or evaluating growth over time is difficult. Furthermore, while individual circles and intersections may grow in size, as the size of the intersections grows, the total area of the model may decrease. This mathematical consequence may lead to a false visual impression that the teacher’s total knowledge has declined over time. While the concept was laudable, the specific implementation is too problematic to be viable.
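The mathematical consequence described above can be illustrated with a minimal sketch. It assumes a simplified two-circle version of the model (the actual profiles use three circles), and the radii and center distances are hypothetical values chosen only for illustration. The sketch computes the total (union) area of two circles as the sum of their areas minus their overlap.

```python
import math

def lens_area(r1, r2, d):
    """Area of overlap between two circles with radii r1, r2 whose centers are d apart."""
    if d >= r1 + r2:            # circles do not overlap
        return 0.0
    if d <= abs(r1 - r2):       # smaller circle lies entirely inside the larger one
        return math.pi * min(r1, r2) ** 2
    a1 = r1**2 * math.acos((d**2 + r1**2 - r2**2) / (2 * d * r1))
    a2 = r2**2 * math.acos((d**2 + r2**2 - r1**2) / (2 * d * r2))
    tri = 0.5 * math.sqrt((-d + r1 + r2) * (d + r1 - r2) * (d - r1 + r2) * (d + r1 + r2))
    return a1 + a2 - tri

def union_area(r1, r2, d):
    """Total area covered by the two circles (sum of areas minus the overlap)."""
    return math.pi * (r1**2 + r2**2) - lens_area(r1, r2, d)

# Hypothetical "before" profile: two unit circles that barely touch.
before = union_area(1.0, 1.0, 2.0)
# Hypothetical "after" profile: both circles are larger and overlap heavily.
after = union_area(1.05, 1.05, 0.5)

print(after < before)  # the total area shrank even though every circle grew
```

In this hypothetical case, both component circles and their intersection grow, yet the total area covered by the diagram decreases, which is the misleading visual impression noted above.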

Figure 4. A recreation of the sample TPACK profile of one of the subjects in “Teaching with technology: Using TPACK to understand teaching expertise in online higher education” by S. N. Kushner Benson & C. L. Ward, 2013, Journal of Educational Computing Research, 48(2), p. 166. Copyright 2013 by Baywood Publishing Co., Inc.

Moving Toward a Visual and Quantitative Model

The goal of our study was to find a way to represent visually a particular teacher’s knowledge profile within the TPACK model after quantifying the teacher’s knowledge level in each of its seven domains. Many different instruments and approaches to assessing this knowledge exist, both quantitative and qualitative. We take no position on which approach or combination of approaches is best, but we wish to develop a representation that can be used to better track a preservice or in-service teacher’s knowledge growth in each area. Whether the interrelational knowledge domains keep the component knowledge domains separate or constitute a transformation of the core domains should not impact the assessment of skills and abilities in each domain. Furthermore, tracking their growth in each of the knowledge areas is still worthwhile for teachers, even if that factor does not directly contribute to TPACK.

Coauthor Colvin was first exposed to the TPACK framework as a student in a course taught by coauthor Tomayko. His assignment was to watch a video (Kimmons, 2011) that explained the framework and to self-assess his knowledge in each area. While the video provided a useful overview of the different knowledge domains and explained the interrelations with examples, the use of a Venn diagram confused him.

As a math teacher, when Colvin uses Venn diagrams in his classroom, he expects students to categorize items in each section of the diagram. He thought that this model was asking him to categorize himself as one of TK, PK, CK, TPK, PCK, TCK, or TPACK. In his self-reflection, he recognized that he had strengths and growth opportunities in all seven of the domains, and placing himself into one of the areas on the Venn diagram would not reflect that he had some skills in the other areas. Furthermore, the model implied that each knowledge domain was binary: one either had the knowledge or not.

He attempted to attach coordinates to each of the three individual knowledge areas of the diagram to plot himself more specifically, but he realized that this approach would still categorize him in one of the regions of the diagram. Furthermore, having good knowledge in each of the component knowledge areas individually does not imply having good knowledge in the interrelational knowledge domains. Using a Venn diagram for the TPACK framework is appropriate for explaining the different knowledge areas and their interrelations, but a Venn diagram is a poor model for evaluating teacher skills and knowledge. While individual teacher actions, skills, and knowledge can be categorized into one of the seven domains on the diagram, the teacher as a whole cannot be. As a result, teachers may experience confusion ascertaining how they “fit into” the TPACK framework and how they might use it to target their professional growth.

Development of TPACK Radar Diagrams

With these concerns in mind, we propose a new representation of a TPACK profile that has the following characteristics:

- It visually represents a teacher’s knowledge in all seven domains.

- Greater total area represents growth over time.

- It is quantitatively based (even if the data gathered is qualitative).

- It is understandable to preservice and in-service teachers so that they can use it to plan their continuing education needs.

- It is understandable to administrators and teacher coaches so that it can be used to provide actionable feedback to teachers.

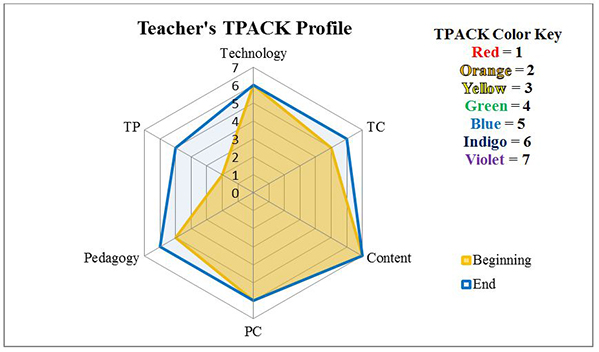

Figure 5 shows an example of a particular teacher’s TPACK profile at the beginning and conclusion of an instructional technology methods class.

Figure 5. A sample teacher’s TPACK profile at the beginning and end of a class.


In the TPACK radar diagram, the bands from 1 to 7 represent a Likert scale as follows:

- 1 – I possess/demonstrate no knowledge/skills in this area

- 2 – I possess/demonstrate minimal knowledge/skills in this area

- 3 – I possess/demonstrate developing knowledge/skills in this area

- 4 – I possess/demonstrate satisfactory knowledge/skills in this area

- 5 – I possess/demonstrate proficient knowledge/skills in this area

- 6 – I possess/demonstrate advanced knowledge/skills in this area

- 7 – I possess/demonstrate exemplary knowledge/skills in this area

In Figure 5, the top vertex of the hexagon represents the teacher’s TK rating, the bottom right vertex represents the teacher’s CK rating, and the bottom left vertex represents the teacher’s PK rating. The intermediate vertices represent the interrelational knowledge domains for the two adjacent vertices (e.g., the upper right vertex represents the teacher’s TCK rating). Finally, the teacher selects the color of the hexagon to represent the teacher’s TPACK domain score, where the colors in rainbow order correspond to the ratings 1 through 7 on the Likert scale.
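The vertex layout described above can be sketched in code. This is a minimal illustration, not the procedure used to produce Figure 5: the angles assume a regular hexagon with one vertex at the top, the color names assume the conventional seven-color rainbow (only violet for a rating of 7 is stated in the text), and the two rating profiles are hypothetical.

```python
import math

# Axis order and angles (in degrees) follow the vertex layout described for Figure 5:
# TK at the top, CK at the bottom right, PK at the bottom left, and each
# interrelational domain between its two component domains.
AXES = [("TK", 90), ("TCK", 30), ("CK", -30), ("PCK", -90), ("PK", -150), ("TPK", 150)]

# Assumed color names, in rainbow order, for TPACK ratings 1 through 7.
RAINBOW = ["red", "orange", "yellow", "green", "blue", "indigo", "violet"]

def vertices(ratings):
    """Convert six domain ratings into (x, y) points on the radar diagram."""
    points = []
    for name, degrees in AXES:
        theta = math.radians(degrees)
        points.append((ratings[name] * math.cos(theta), ratings[name] * math.sin(theta)))
    return points

def polygon_area(points):
    """Area of a simple polygon via the shoelace formula."""
    total = 0.0
    for (x1, y1), (x2, y2) in zip(points, points[1:] + points[:1]):
        total += x1 * y2 - x2 * y1
    return abs(total) / 2

def tpack_color(rating):
    """Map an integer TPACK rating (1-7) to a fill color."""
    return RAINBOW[rating - 1]

# Hypothetical ratings at the start and end of a course.
start = {"TK": 4, "TCK": 2, "CK": 5, "PCK": 3, "PK": 3, "TPK": 2, "TPACK": 2}
end = {"TK": 5, "TCK": 4, "CK": 5, "PCK": 4, "PK": 4, "TPK": 4, "TPACK": 4}

for label, profile in (("start", start), ("end", end)):
    area = polygon_area(vertices(profile))
    print(label, round(area, 2), tpack_color(profile["TPACK"]))
```

In this sketch, the enclosed area grows as the ratings grow, and the fill color carries the seventh (TPACK) rating, which has no vertex of its own.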

This radar diagram representation of a teacher’s TPACK profile was designed specifically to meet our criteria. Under this model, teachers understand that they possess some degree of knowledge in each of the seven domains, and their knowledge in each domain can be evaluated separately. If a Likert scale questionnaire is used to give teachers more specific examples of knowledge and skills essential to each domain, teachers can easily average their scores across the domain to come up with a single number to plot in the diagram. Furthermore, an observer can use qualitative data sources like classroom observations, interviews, and lesson plans to rate the teacher in each domain. The visual shows teachers where they have grown and where they need to grow further in the future. The ultimate goal is to fill the outer hexagon and color it violet.
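The averaging step mentioned above might look like the following minimal sketch. The questionnaire responses are hypothetical, and the function name `domain_scores` is introduced here only for illustration.

```python
from statistics import mean

def domain_scores(responses):
    """Average Likert ratings (1-7) within each TPACK domain."""
    by_domain = {}
    for domain, rating in responses:
        by_domain.setdefault(domain, []).append(rating)
    return {domain: mean(ratings) for domain, ratings in by_domain.items()}

# Hypothetical questionnaire: each item is tagged with the domain it targets.
responses = [("TK", 5), ("TK", 4), ("CK", 6), ("CK", 6), ("TCK", 3), ("TCK", 4)]

print(domain_scores(responses))
```

Each resulting average is the single number a teacher would plot on the corresponding axis of the diagram.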

One caveat is that a teacher may self-evaluate lower in a given knowledge area after being exposed to further knowledge and skills about it. In this case, the teacher’s knowledge has not regressed; rather, the teacher has a better awareness and understanding of the domain itself. Thus, teachers may revise their earlier self-evaluation to what they now understand to be a more appropriate level. Furthermore, when using qualitative data, the ratings are evidence based, so a lower rating may correspond to a lack of evidence presented for a particular domain in a specific lesson or observation. We do not propose a specific evaluative tool or set of tools to assess a teacher’s TPACK. However, the TPACK radar diagram provides an easily understandable output for any suitable TPACK evaluation tool.

It is important to note that the Likert scale used to report ratings in each domain is nonspecific and somewhat subjective. Exact descriptors for what constitutes satisfactory knowledge and skills as opposed to proficient knowledge and skills within a domain would necessarily vary among the content areas and grade levels taught. Ideally, an observer using this scale would provide clarifications to the scale that were specific to the observed teacher’s grade level and content area in order to make the ratings more useful and actionable for the teacher. Additionally, subject- and grade-level specific questionnaires can still categorize each question by the domain targeted. Thus, this more general visual representation of a TPACK profile remains understandable and useful to all teachers, but details can be fleshed out for specific teachers customized to their specific teaching assignment.

Pilot Study of TPACK Radar Diagrams

Research Questions

Although the TPACK radar diagrams have several advantages in alleviating confusion surrounding the TPACK framework for preservice teachers, we wanted to ensure that they communicated a fundamental understanding of the framework in an accurate manner. Therefore, we designed a study to investigate two questions. First, does exposure to TPACK radar diagrams improve preservice teachers’ understanding of the TPACK framework? Second, do preservice teachers who have been exposed to TPACK radar diagrams have a better understanding of their immediate next steps for gaining knowledge and skills for classroom success?

Method

Twenty-five undergraduate students in a mathematics and science teacher preparation program at a large public university in the mid-Atlantic area of the United States were asked to watch one of two videos explaining the TPACK framework. Afterwards, students were asked to write a short reflection about their understanding of the framework based on the video they saw. Students were given the same writing prompts regardless of the video they watched.

We then analyzed the written reflections for evidence of understanding the framework and discussion of specific next steps students could take to improve their efficacy while still preservice teachers. Responses were scored using two rubrics, and the resulting data were disaggregated and analyzed to determine if exposure to a TPACK radar diagram influenced understanding of the framework or impacted plans for future development.

Participants. All students applying to the university’s teaching program are required to take a sophomore-level pedagogy course that builds on previous methods classes, which require fieldwork in elementary and middle school classrooms. Thus, students in this course were aspiring teachers in secondary science or mathematics, were majoring in one of these areas, and had some prior exposure to education coursework. Coauthor Tomayko taught two sections of this class in the spring semester of 2014, and the 25 students enrolled in either section of the course were the subjects. Students were informed that completing the reflection would count as one of the 10 reflection assignments that contributed to their final grade for the course.

One student was removed from the study for failing to submit a reflection, so the final number of participants was 24. Out of these 24 participants, 19 were female and 5 were male. In addition, 13 were mathematics majors and 11 were science majors. Table 1 summarizes the relevant demographics of the subjects in the study.

Table 1

Demographics of Students by Treatment Group

| Demographic | Group A | Group B |
|---|---|---|
| Gender | | |
| Female | 9 | 10 |
| Male | 3 | 2 |
| Major | | |
| Mathematics | 7 | 6 |
| Science | 5 | 6 |
| Total | 12 | 12[a] |

[a] There were originally 13 subjects in group B, but one student did not submit a reflection and was dropped from the study.

Data Collection. Each student was randomly assigned to watch one of two videos describing the TPACK framework. Group A watched a video (Video 1) that we created (Colvin, 2014), while group B watched a video describing the original TPACK framework (Kimmons, 2011). The video we created used nearly the same narration as the other video, but it showed how teachers might rate themselves in each domain on a Likert scale and plot this information on a hexagonal TPACK radar diagram.

Video 1. TPACK 2.0 (https://www.youtube.com/watch?v=-wCW4dJB81g)

After watching their assigned video, the students generated written reflections about their exposure to TPACK by responding to the items, “Explain this framework in your own words,” and “Describe how you fit in this framework,” which they submitted to us.

Data Analysis. This pilot study employed mainly qualitative methods to help ascertain answers to the research questions. However, the qualitative data were quantified using a rubric in order to aid with comparative analysis between the two treatment groups. Afterwards, quotations from the qualitative data were specifically analyzed to supplement the quantitative findings. As Strauss and Corbin (1998) pointed out, “Qualitative analysis…refer[s] not to the quantifying of qualitative data” (p. 11), and therefore, our approach is not truly qualitative. However, because of our small sample size (n = 24) and the fact that this new representation of TPACK was entirely untested, it was necessary and appropriate to use both quantitative and qualitative evidence to support our answers to the research questions.

Once all students submitted their written reflections, both authors independently evaluated the reflections in two categories (Understanding the TPACK Framework and Understanding Next Steps) with the 3-point rubric shown in Figure 6. The authors then compared ratings and reread reflections together to reconcile any discrepancies in scoring. A final score for each student for each part of the rubric was assigned jointly.

| Category | 0 points | 1 point | 2 points | 3 points |
|---|---|---|---|---|
| Understanding the TPACK Framework | The student had significant errors in their understanding of the TPACK framework OR demonstrated no understanding whatsoever. | The student was able to name and correctly describe some of the TPACK areas. | The student was able to name and correctly describe all of the different TPACK areas and TPACK itself. | The student was able to name, describe, and give examples of all of the different TPACK areas, including TPACK itself. |
| Understanding Next Steps | The student did not mention any next steps. | The student mentioned future growth, but gave no specific next steps. | The student mentioned specific next steps. | The student mentioned specific next steps and connected those next steps to the TPACK framework. |

Figure 6. Rubric for scoring reflections.
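The reconciliation step in the scoring procedure can be sketched as follows. This is only an illustration of how independent ratings might be compared before a joint rereading; the student identifiers and scores are hypothetical and are not data from the study.

```python
CATEGORIES = ("Understanding the TPACK Framework", "Understanding Next Steps")

# Hypothetical independent ratings (0-3 in each category) from two raters.
rater_1 = {"S01": (1, 1), "S02": (2, 0), "S03": (3, 2)}
rater_2 = {"S01": (1, 1), "S02": (1, 0), "S03": (3, 3)}

def find_discrepancies(first, second):
    """List every (student, category) pair on which the two raters disagree."""
    flagged = []
    for student in sorted(first):
        for category, a, b in zip(CATEGORIES, first[student], second[student]):
            if a != b:
                flagged.append((student, category, a, b))
    return flagged

for student, category, a, b in find_discrepancies(rater_1, rater_2):
    print(f"{student}: {category} (rater 1 = {a}, rater 2 = {b})")
```

Only the flagged reflections would need to be reread together before a final joint score is assigned.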

In analyzing the reflections, we looked for specific evidence of understanding the TPACK framework and being able to pinpoint concrete next steps to take to improve knowledge and skills within the TPACK framework’s knowledge domains. Our hypothesis was that preservice teachers who were exposed to TPACK radar diagrams would have a better understanding of the general TPACK framework and would be more able to identify weaknesses in their teacher preparation. They should also be able to more easily identify specific steps they could take to address those weaknesses before beginning their teaching service.

Findings

After analyzing the scores of each part of the rubric separately, we calculated the results displayed in Tables 2, 3, and 4.

Table 2

Distribution of Rubric Scores by Treatment for Understanding the TPACK Framework

| Rubric Score | Group A | Group B |
|---|---|---|
| 0 points | 0 | 2 |
| 1 point | 7 | 6 |
| 2 points | 4 | 4 |
| 3 points | 1 | 0 |
| Mean Score (n = 12 for both) | 1.500 | 1.167 |
| Standard Deviation | 0.645 | 0.687 |

While the distribution of scores is similar in both groups, as evidenced by the close standard deviation scores, we found it most striking that no one in group A received a 0 on the rubric, whereas two subjects in group B received a 0. Also, one subject in group A was able to achieve a perfect rubric score, while no subject in group B managed to do so. Keeping in mind the small sample size (n = 24 overall, with 12 students in each group), we concluded that the video showing the TPACK radar diagram was at least as effective in explaining the TPACK framework to preservice teachers as the video of the original Venn diagram model. Thus, while we could not definitively state that the radar diagrams were more effective at explaining the TPACK framework than the Venn diagram representation, they would at least not be detrimental to preservice teachers in secondary mathematics or science.
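The summary statistics in Table 2 can be recomputed from the score counts with a short sketch. The reported standard deviations are reproduced when the population formula (dividing by n rather than n − 1) is used; that choice is an inference from the numbers, not something stated in the text.

```python
from statistics import mean, pstdev

def expand(counts):
    """Turn a {score: frequency} mapping into a flat list of individual scores."""
    return [score for score, freq in counts.items() for _ in range(freq)]

# Score counts taken from Table 2 (Understanding the TPACK Framework).
table_2 = {
    "Group A": {0: 0, 1: 7, 2: 4, 3: 1},
    "Group B": {0: 2, 1: 6, 2: 4, 3: 0},
}

for group, counts in table_2.items():
    scores = expand(counts)
    # pstdev is the population standard deviation (divides by n).
    print(group, round(mean(scores), 3), round(pstdev(scores), 3))
```

The same two functions apply unchanged to any other distribution of rubric scores.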

Table 3

Distribution of Rubric Scores by Treatment for Understanding Next Steps

| Rubric Score | Group A | Group B |
|---|---|---|
| 0 points | 2 | 2 |
| 1 point | 6 | 6 |
| 2 points | 3 | 4 |
| 3 points | 1 | 0 |
| Mean Score (n = 12 for both) | 1.250 | 1.167 |
| Standard Deviation | 0.829 | 0.687 |

In analyzing the data for how well student reflections demonstrated an understanding of their next steps, a key feature of the new representation, we found that the distribution of scores was almost identical between the two treatment groups. Though the mean for treatment group A was higher, this was due solely to the one student who received a perfect score in this area of the rubric. Additionally, this one student’s score also caused the standard deviation of group A to be much higher than the standard deviation of group B. Thus, the gap in standard deviations was much greater than in the previous score distribution. Because the sample size was so small (n = 24), we were unable to conclude that there was any discernible difference in preservice teachers being able to articulate steps to improve their skills under the TPACK framework between the two treatment groups. However, we believe that the methodology of the study contributed to this result in two ways.

Discussion

Limitations

Several limitations to our pilot study hindered our ability to answer the research questions definitively. The small sample size (n = 24) did not permit us to determine if the differences observed between the treatment groups were significant. Additionally, the participants were all mathematics or science majors applying to a secondary teacher preparation program. As a result, we were unable to evaluate the effectiveness of the new representation for preservice teachers in other content areas or grade levels.

Two further limitations of the pilot study became apparent after we analyzed the data. First, the reflection prompt did not ask students specifically about next steps. We worded the prompt purposefully to promote spontaneous, rather than guided, reflection on what preservice teachers should do with the information they were presented. However, because we did not ask the students to consider future professional development specifically in their reflections, we were unable to compare the two treatment groups successfully in this area.

Second, in order to minimize the effect of differing narration of the videos, the script for the group A video was created to be nearly identical to the script of the group B video. Thus, the video script may have contributed to overall low scores in the category of Understanding the TPACK Framework, and our reliance on another’s previously created video was partially responsible for this limitation. However, the qualitative evidence supports our claim that the TPACK radar diagram model benefits preservice teachers in understanding both the framework and their next steps.

As evidence that the video script played a key role in student reflections, we examined the breakdown of scores in both rubric categories by student major (Table 4).

Table 4

Average Rubric Scores for Framework and Next Steps by Treatment Group and Major

| Rubric Score | Group A | Group B |
|---|---|---|
| Average Framework | | |
| Mathematics | 1.286 (n = 7) | 1.000 (n = 6) |
| Science | 1.800 (n = 5) | 1.333 (n = 6) |
| Average Next Steps | | |
| Mathematics | 1.000 (n = 7) | 1.000 (n = 6) |
| Science | 1.600 (n = 5) | 1.333 (n = 6) |

Though the sample sizes were small, we noticed that subjects majoring in science (n = 11) had noticeably higher scores on the rubric than the subjects in the same treatment group majoring in mathematics (n = 13). Part of the reason for this difference may have been that the narration script of both videos gave specific examples from science (along with language arts and social studies) but made no mention of mathematics or examples from that field. Since students were given points on the rubric for mentioning specific examples, the inclusion of such examples may have prompted the science majors more clearly than the mathematics majors. In fact, we found that some of the science students used examples paraphrased or quoted verbatim from the video in their reflections.
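As a consistency check, the by-major averages in Table 4 can be combined into overall group means and compared with Tables 2 and 3. The sketch below weights each subgroup mean by its size; the function name `pooled_mean` is introduced only for illustration, and small differences from the reported values reflect rounding in the published table.

```python
def pooled_mean(subgroups):
    """Combine (mean, n) pairs into one overall mean, weighting each by its size."""
    total_n = sum(n for _, n in subgroups)
    return sum(m * n for m, n in subgroups) / total_n

# (mean, n) pairs for mathematics and science majors, taken from Table 4.
framework_a = pooled_mean([(1.286, 7), (1.800, 5)])
framework_b = pooled_mean([(1.000, 6), (1.333, 6)])
next_steps_a = pooled_mean([(1.000, 7), (1.600, 5)])
next_steps_b = pooled_mean([(1.000, 6), (1.333, 6)])

# Overall means reported in Tables 2 and 3 (Group A, Group B).
reported = {"framework": (1.500, 1.167), "next steps": (1.250, 1.167)}

print(round(framework_a, 2), round(framework_b, 2))
print(round(next_steps_a, 2), round(next_steps_b, 2))
```

Within rounding, the pooled values agree with the overall group means reported earlier.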

Implications

The qualitative evidence we gathered from the reflections supports the need for a better model of the TPACK framework, and the evidence suggests that TPACK radar diagrams may fit the needs of preservice teachers. In analyzing the reflections, it is important to note that the video was the students’ first exposure to the TPACK framework; as such, we found that many students used “TPACK” to refer to both the knowledge domain and the overall model in their reflections. While this improper use of terminology could be construed as a lack of understanding of TPACK, we believe this is another consequence of the video script, and not of the model.

A common theme from the reflections gathered from treatment group A was that the TPACK radar diagram model was about growth and improvement. One female mathematics major wrote, “Overall, a TPACK is a way to see what needs to be worked on and what is working well to analyze the steps that must be taken to be more successful in the workplace.” A female science major echoed this sentiment by writing, “Overall, the TPACK is a method in which educators can evaluate their effectiveness as a teacher and see in which areas they need to improve.” Finally, a male mathematics major wrote in his reflection that, “TPACK shows where one needs to strengthen their abilities as well as where one might have the most success in combining their abilities.”

In contrast, some students in treatment group B mentioned growth, but they were less able to connect it to their current effectiveness. For example, one female science major wrote that she “believe[s] that mastery of such a framework can only be achieved through education, practice, and time.”

While there may have been some cross-contamination of the treatment groups (possibly caused by classmates in different treatment groups discussing the videos they watched with each other before writing their reflections), students in treatment group B seemed to demand in their reflections a better way of representing their fit in the TPACK framework. One female science major stated explicitly in her reflection, “Personally, I am not sure that I fit anywhere in this framework as of now.” A female mathematics major also commented that she “fit partially within each category.”

Some students even took it upon themselves to quantify their fit in the TPACK framework. A different female mathematics major wrote, “If I were to assign numbers to each of the areas, I would give myself six for technology, five for pedagogy, and three or two for content.” While this student did not say what scale she was using, she clearly indicated that the categorization in the Venn diagram was not working for her. Finally, one female science major wrote, “My TPACK is a tiny little dot that has so much growing to do.” It is clear that this student would benefit from a visual model of her growth in teaching skills over time.

Conclusion and Next Steps

Reflecting upon the results of the pilot study, we determined that the TPACK radar diagram model was useful to preservice secondary teachers in mathematics and science. However, we also realized that the video and the reflection questions needed refinement. First, because the original video script did not meet the needs of the TPACK radar diagram model, we will develop and field test two new videos (one using the original TPACK Venn diagram and the other using a TPACK radar diagram) to explain the models. Second, the reflection questions need modification to ensure they encourage students to discuss specific next steps for professional development. However, we must be cautious not to lead participants to any particular answer. We hope that these changes to the videos and reflection questions will allow preservice and in-service teachers across content areas and grade levels to better understand and use TPACK radar diagrams.

After these revisions, we plan to conduct a larger study to determine whether TPACK radar diagrams promote better understanding of the TPACK framework for preservice and in-service teachers in a variety of content areas and grade levels. This study would employ mixed methods to gather longitudinal data about the participants and use multiple data sources (e.g., qualitative sources such as questionnaires, lesson plans, and observations; quantitative sources such as surveys and self-assessments) to develop more accurate TPACK profiles of the participants.

By providing teachers with a model for tracking and assessing their knowledge, skills, and daily lesson preparation within a framework that incorporates technological instruction, we hope to raise awareness of how these domains affect daily classroom instruction and of developing teachers’ responsibility to provide authentic, content-specific technology instruction.

References

Angeli, C., & Valanides, N. (2009). Epistemological and methodological issues for the conceptualization, development, and assessment of ICT–TPCK: Advances in technological pedagogical content knowledge (TPCK). Computers & Education, 52(1), 154-168.

Archambault, L. M., & Barnett, J. H. (2010). Revisiting technological pedagogical content knowledge: Exploring the TPACK framework. Computers & Education, 55(4), 1656-1662.

Canbazoglu Bilici, S., Yamak, H., Kavak, N., & Guzey, S. S. (2013). Technological pedagogical content knowledge self-efficacy scale (TPACK-SeS) for preservice science teachers: Construction, validation and reliability. Egitim Arastirmalari-Eurasian Journal of Educational Research, 52, 37-60.

Chai, C. S., Koh, J. H. L., & Tsai, C.-C. (2010). Facilitating preservice teachers’ development of technological, pedagogical, and content knowledge (TPACK). Journal of Educational Technology & Society, 13(4), 63-73.

Chai, C. S., Koh, J. H. L., & Tsai, C.-C. (2011). Exploring the factor structure of the constructs of technological, pedagogical, content knowledge (TPACK). The Asia-Pacific Education Researcher, 20(3), 595-603.

Colvin, J. C. (2014, April 14). TPACK 2.0 [YouTube video]. Retrieved from https://www.youtube.com/watch?v=-wCW4dJB81g

Cox, S. (2008). A conceptual analysis of technological pedagogical content knowledge (Unpublished doctoral dissertation). Brigham Young University, Provo, UT.

Cox, S., & Graham, C. R. (2009). Diagramming TPACK in practice: Using an elaborated model of the TPACK framework to analyze and depict teacher knowledge. TechTrends, 53(5), 60-69.

Kimmons, R. (2011, March 22). TPACK in 3 minutes [YouTube video]. Retrieved from http://www.youtube.com/watch?v=0wGpSaTzW58

Koh, J. H. L., & Chai, C. S. (2011). Modeling pre-service teachers’ technological pedagogical content knowledge (TPACK) perceptions: The influence of demographic factors and TPACK constructs. In G. Williams, P. Statham, N. Brown, & B. Cleland (Eds.), Changing demands, changing directions: ascilite 2011 proceedings (pp. 735-746). Hobart, TAS: University of Tasmania.

Koh, J. H. L., Chai, C. S., & Tsai, C.-C. (2010). Examining the technological pedagogical content knowledge of Singapore pre-service teachers with a large-scale survey. Journal of Computer Assisted Learning, 26(6), 563-573.

Koh, J. H. L., Chai, C. S., & Tsai, C.-C. (2013). Examining practicing teachers’ perceptions of technological pedagogical content knowledge (TPACK) pathways: A structural equation modeling approach. Instructional Science, 41(4), 793-809.

Kushner Benson, S. N., & Ward, C. L. (2013). Teaching with technology: Using TPACK to understand teaching expertise in online higher education. Journal of Educational Computing Research, 48(2), 153-172.

Mishra, P., & Koehler, M. J. (2006). Technological pedagogical content knowledge: A framework for integrating technology in teacher knowledge. Teachers College Record, 108(6), 1017-1054.

Schmidt, D. A., Baran, E., Thompson, A. D., Mishra, P., Koehler, M. J., & Shin, T. S. (2009). Technological pedagogical content knowledge (TPACK): The development and validation of an assessment instrument for preservice teachers. Journal of Research on Technology in Education, 42(2), 123-149.

Shinas, V. H., Yilmaz-Ozden, S., Mouza, C., Karchmer-Klein, R., & Glutting, J. J. (2013). Examining domains of technological pedagogical content knowledge using factor analysis. Journal of Research on Technology in Education, 45(4), 339-360.

Shulman, L. S. (1986). Those who understand: Knowledge growth in teaching. Educational Researcher, 15(2), 4-14.

Strauss, A., & Corbin, J. (1998). Basics of qualitative research: Techniques and procedures for developing grounded theory (2nd ed.). Thousand Oaks, CA: SAGE Publications.

Author Information

Julien C. Colvin

Towson University

Ming C. Tomayko

Towson University

